AI-Assisted Cloud Intrusion Achieves AWS Admin Access in 8 Minutes

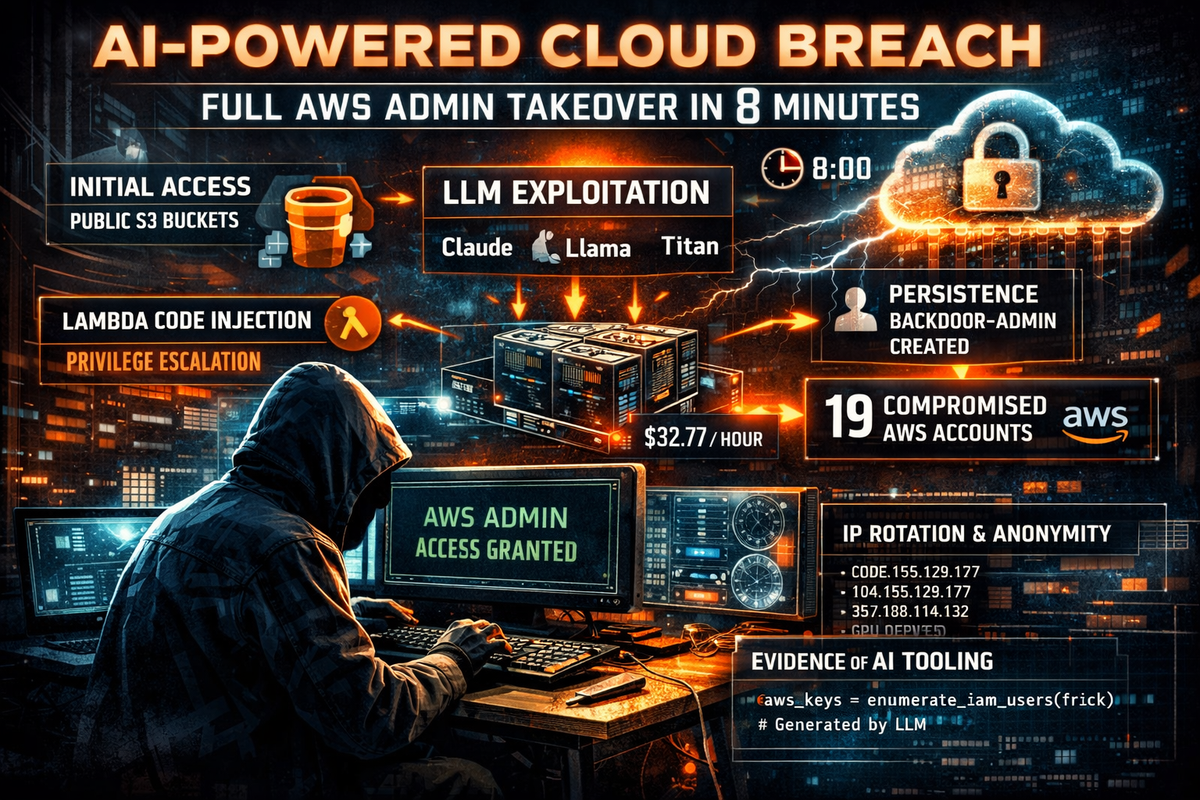

Sysdig's Threat Research Team has published a detailed analysis of a cloud intrusion in which a threat actor went from stolen credentials to full AWS administrative access in under 10 minutes — with strong indicators that large language models were used throughout the operation to automate reconnaissance, generate exploitation code, and make real-time decisions.

The attack, observed on November 28, 2025, compromised 19 unique AWS principals, abused Amazon Bedrock for LLMjacking, and spun up GPU instances costing $32.77/hour — all within a two-hour window before access was revoked.

Initial Access: Public S3 Buckets

The attacker gained entry through credentials discovered in publicly accessible S3 buckets. The buckets contained RAG (Retrieval-Augmented Generation) data for AI models and were named using common AI tool naming conventions that the attacker actively searched for during reconnaissance. The compromised credentials belonged to an IAM user with read/write permissions on Lambda and restricted Bedrock access — likely created by the victim organization to automate Bedrock tasks.
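Defenders can look for this kind of exposure themselves before an attacker does. The sketch below is a minimal illustration and not taken from the Sysdig report: it scans text for strings shaped like AWS access key IDs. The `objects` mapping is a hypothetical stand-in for bucket contents that have already been downloaded and decoded, and the pattern only matches key IDs with the `AKIA` or `ASIA` prefix, so it will miss secrets in other formats.

```python
import re

# AWS access key IDs are 20 characters: a 4-letter prefix such as AKIA
# (long-term keys) or ASIA (temporary keys) plus 16 uppercase alphanumerics.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[A-Z0-9]{16}\b")


def find_access_key_ids(text: str) -> list[str]:
    """Return every substring of `text` that looks like an AWS access key ID."""
    return ACCESS_KEY_ID.findall(text)


def scan_objects(objects: dict[str, str]) -> dict[str, list[str]]:
    """Map object key -> suspected access key IDs, omitting clean objects.

    `objects` is a hypothetical mapping of object key -> decoded text body;
    fetching the objects from S3 is out of scope for this sketch.
    """
    hits = {}
    for key, body in objects.items():
        found = find_access_key_ids(body)
        if found:
            hits[key] = found
    return hits
```

A match is only a lead: the key still has to be checked for validity and rotated if it is live.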

Privilege Escalation: Lambda Code Injection in 8 Minutes

After failing to assume roles with administrative names (admin, Administrator, sysadmin, netadmin), the attacker pivoted to Lambda function code injection. Because the compromised user had UpdateFunctionCode and UpdateFunctionConfiguration permissions, the attacker replaced the code of an existing Lambda function named EC2-init with a malicious payload.

The injected code contained comments written in Serbian and showed patterns consistent with LLM generation, including comprehensive exception handling and rapid iteration. It performed three operations: it enumerated all IAM users with their access keys and policies, created new access keys for the admin user frick, and listed S3 bucket contents. The attacker also increased the Lambda timeout from 3 to 30 seconds so that the function had time to finish executing.

The entire sequence from credential theft to successful admin key creation took eight minutes.
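One way to spot this escalation path in logs is to look for a principal that changes both the code and the configuration of the same function in quick succession. The following is a rough sketch under stated assumptions, not Sysdig's detection logic: it expects CloudTrail-style records as plain dicts, assumes the Lambda event names begin with `UpdateFunctionCode` and `UpdateFunctionConfiguration` (CloudTrail appends an API version suffix, so verify the exact names in your own logs), and assumes `requestParameters.functionName` is present on both. The 10-minute window is an arbitrary choice.

```python
from datetime import datetime, timedelta


def parse_time(ts: str) -> datetime:
    """Parse a CloudTrail-style UTC timestamp such as 2025-11-28T10:06:00Z."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ")


def suspicious_lambda_updates(events, window=timedelta(minutes=10)):
    """Return (principal ARN, function name) pairs where the same principal
    updated a function's code and its configuration within `window`."""
    code, config = {}, {}
    for e in events:
        name = e.get("eventName", "")
        # Prefix match because the recorded names carry a version suffix
        # (assumption: confirm against real records).
        if name.startswith("UpdateFunctionCode"):
            bucket = code
        elif name.startswith("UpdateFunctionConfiguration"):
            bucket = config
        else:
            continue
        key = (e["userIdentity"]["arn"], e["requestParameters"]["functionName"])
        bucket.setdefault(key, []).append(parse_time(e["eventTime"]))

    flagged = []
    for key, code_times in code.items():
        for t_cfg in config.get(key, []):
            if any(abs(t_cfg - t_code) <= window for t_code in code_times):
                flagged.append(key)
                break
    return flagged
```

Legitimate deployments also touch code and configuration together, so a result from this function is a prompt for review rather than an alert on its own.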

Lateral Movement: 19 Principals

With admin credentials in hand, the attacker moved aggressively across the environment. They assumed six different IAM roles across 14 sessions and compromised five IAM users, totaling 19 unique AWS principals. Session names revealed intent at each stage — "explore," "test," "pwned," "escalation," and notably "claude-session."

The attacker also created a persistence user named backdoor-admin with the AdministratorAccess policy attached.

One notable behavior during role assumption: the attacker attempted to use account IDs with ascending and descending digits (123456789012 and 210987654321) alongside a real external account ID. This pattern is consistent with AI hallucinations, providing further evidence of LLM-assisted operations.
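The two placeholder-style IDs share a simple property: each digit is one more (or one less) than the previous digit, wrapping around at 10. A small check for that property might look like the sketch below. The function name is hypothetical, and the heuristic is only an illustration of the pattern described above, not a rule from the Sysdig report.

```python
def is_sequential_account_id(account_id: str) -> bool:
    """True if the 12 digits step by +1 or -1 (mod 10) from start to end."""
    if len(account_id) != 12 or not (account_id.isascii() and account_id.isdigit()):
        return False
    digits = [int(c) for c in account_id]
    # Difference between neighbours modulo 10: 1 means ascending,
    # 9 means descending (equivalent to -1 mod 10).
    steps = {(b - a) % 10 for a, b in zip(digits, digits[1:])}
    return steps == {1} or steps == {9}
```

Both IDs from the incident, `123456789012` and `210987654321`, satisfy this check, while an ordinary account ID almost never will.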

LLMjacking via Amazon Bedrock

The attacker checked whether Bedrock model invocation logging was enabled — a behavior previously observed in LLMjacking attacks. Finding it disabled, they invoked multiple AI models including Claude Sonnet 4, Claude Opus 4, Claude 3.5 Sonnet, Claude 3 Haiku, DeepSeek R1, Llama 4 Scout, Amazon Nova Premier, Amazon Titan Image Generator, and Cohere Embed v3. Some Claude model invocations required accepting Marketplace agreements first.
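The order of operations here (check logging, then invoke) is itself a usable signal. The sketch below flags principals that call `GetModelInvocationLoggingConfiguration` and later invoke a model. It assumes CloudTrail-style dicts sorted chronologically, that the principal is identified by `userIdentity.arn`, and that invocation events appear in the trail under the Bedrock runtime operation names listed in the code; whether they are recorded depends on how the trail is configured, so treat this as an outline.

```python
LOGGING_CHECK = "GetModelInvocationLoggingConfiguration"

# Bedrock runtime operations that run a model.
INVOKE_EVENTS = {
    "InvokeModel",
    "InvokeModelWithResponseStream",
    "Converse",
    "ConverseStream",
}


def logging_check_then_invoke(events):
    """Return principals that checked invocation logging and then invoked a
    model. `events` is assumed to be in chronological order."""
    checked = set()
    flagged = []
    for e in events:
        principal = e["userIdentity"]["arn"]
        name = e["eventName"]
        if name == LOGGING_CHECK:
            checked.add(principal)
        elif name in INVOKE_EVENTS and principal in checked and principal not in flagged:
            flagged.append(principal)
    return flagged
```

Administrators legitimately read the logging configuration too, so this works best combined with context such as a newly created access key or an unfamiliar source IP.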

A Terraform module found in the victim's S3 buckets revealed the attacker's intended persistence mechanism — a Lambda function with a publicly accessible URL (no authentication required) designed to generate Bedrock credentials on demand. The target user claude-bedrock-access was never created before access was revoked, indicating this backdoor was not deployed in time.

GPU Instance Abuse

The attacker attempted to launch a p5.48xlarge instance named "stevan-gpu-monster" five times, failing due to capacity constraints. They successfully launched a p4d.24xlarge instance ($32.77/hour, roughly $23,600/month) with eight NVIDIA A100 GPUs and a 2TB EBS volume.

A setup script found in the victim's S3 buckets included CUDA installation, PyTorch with A100 support, training libraries, and a publicly accessible JupyterLab server on port 8888 — providing backdoor access independent of AWS credentials. The script also referenced a non-existent GitHub repository (github.com/anthropic/training-scripts.git), another hallmark of LLM-generated code.

The instance was terminated after 5 minutes. Whether the goal was model training or reselling compute access remains unclear.
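For a sense of scale, the cost figures can be reproduced with simple arithmetic. The sketch below uses only the $32.77 hourly rate quoted above and assumes a 30-day month of continuous use; it ignores storage, data transfer, and any billing minimums.

```python
HOURLY_RATE_USD = 32.77  # p4d.24xlarge rate quoted in the article


def cost_usd(hours: float, hourly_rate: float = HOURLY_RATE_USD) -> float:
    """Compute cost for `hours` of runtime, rounded to cents."""
    return round(hours * hourly_rate, 2)


monthly_cost = cost_usd(24 * 30)      # 30-day month, running continuously
five_minute_cost = cost_usd(5 / 60)   # the 5 minutes the instance actually ran
```

Under these assumptions `monthly_cost` is 23594.4, which matches the rough $23,600 per month figure, and `five_minute_cost` is 2.73. The direct compute bill from this instance was small only because it was terminated quickly.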

Defense Evasion

The attacker used an IP rotator tool, changing source IPs for each request to defeat correlation-based detection. Operations were distributed across 19 principals, and role chaining (assuming one role only to assume another) further complicated tracking.
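Per-request IP rotation defeats detections keyed on a single source address, but it leaves its own trace: one access key appearing from an unusually large number of addresses. A minimal sketch of that idea follows. It assumes CloudTrail-style dicts with `userIdentity.accessKeyId` and `sourceIPAddress` fields, and the threshold of five distinct IPs is an arbitrary placeholder that would need tuning per environment.

```python
from collections import defaultdict


def keys_with_many_source_ips(events, min_distinct_ips=5):
    """Return {access key ID: distinct IP count} for keys seen from at least
    `min_distinct_ips` different source addresses."""
    ips_by_key = defaultdict(set)
    for e in events:
        ips_by_key[e["userIdentity"]["accessKeyId"]].add(e["sourceIPAddress"])
    return {
        key: len(ips)
        for key, ips in ips_by_key.items()
        if len(ips) >= min_distinct_ips
    }
```

In practice the events would first be limited to a short time window, since a long-lived key used from many offices or CI runners can cross the threshold legitimately.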

Evidence of LLM-Assisted Operations

Multiple indicators point to AI-assisted execution throughout the attack:

- LLM-generated Lambda code with Serbian comments and comprehensive exception handling

- Hallucinated AWS account IDs during role assumption

- A non-existent GitHub repository in the GPU setup script

- The speed of iteration: eight minutes from credential theft to admin access, including reconnaissance, code generation, and multiple attempts

- A session named "claude-session"

As Sysdig notes, the hallucinations observed in this operation will become rarer as offensive AI agents improve their accuracy.

Attack Timeline

- 0:00 — Credentials extracted from public S3 buckets

- 0:06 — Failed admin role assumptions, succeeded with sysadmin/netadmin/account

- 0:08 — Lambda code injection, admin user frick compromised

- 0:11 — backdoor-admin user created with AdministratorAccess

- 0:58–1:51 — 13 Bedrock model invocations with cross-region inference

- 1:00 — Access keys created for BedrockFullAccess user and AzureADRoleManager

- 1:05–1:35 — Extensive reconnaissance across Secrets Manager, SSM, S3, ECS, Organizations, RDS, CloudWatch, Bedrock, OpenSearch, SageMaker, KMS

- 1:21 — Additional role assumptions (developer, external)

- 1:42 — GPU instance launched (p4d.24xlarge), terminated after 5 minutes

- 1:51 — Access revoked, attack ended

IOCs

IP Addresses (VPN):

104.155.129.177

104.155.178.59

104.197.169.222

136.113.159.75

34.173.176.171

34.63.142.34

34.66.36.38

34.69.200.125

34.9.139.206

35.188.114.132

35.192.38.204

34.171.37.34

204.152.223.172

34.30.49.235

IP Addresses (Non-VPN):

103.177.183.165

152.58.47.83

194.127.167.92

197.51.170.131

MITRE ATT&CK

T1078.004 — Valid Accounts: Cloud Accounts. Initial access via credentials stolen from public S3 buckets.

T1059.006 — Command and Scripting Interpreter: Python. LLM-generated Python code injected into a Lambda function.

T1548 — Abuse Elevation Control Mechanism. Privilege escalation via Lambda code injection exploiting the attached execution role.

T1098.001 — Account Manipulation: Additional Cloud Credentials. Access keys created for existing admin users and the new backdoor-admin user.

T1580 — Cloud Infrastructure Discovery. Extensive enumeration across 10+ AWS services.

T1578 — Modify Cloud Compute Infrastructure. GPU instance provisioning, security group creation, EBS snapshot sharing.

T1496 — Resource Hijacking. Bedrock model abuse (LLMjacking) and GPU compute provisioning.

T1537 — Transfer Data to Cloud Account. Scripts and instance data uploaded to the victim's S3 buckets.